How to Use AI to Audit AI: My Voice Agent Quality System

How to Use AI to Audit AI (And Why Your Voice Agent Needs It)

I manage the marketing for a handyman business in Utah. The owner is usually on a ladder or driving to a job site when the phone rings. So we set up a Voice AI agent through GoHighLevel to answer his calls — take the caller's name, confirm their number, ask what they need, and arrange a callback.

It works. But "works" and "works well" are two different things.

Wait — If It's AI, Why Does It Need Auditing?

Fair question. If the AI is smart enough to answer the phone, shouldn't it be smart enough to do it right?

Here's the thing. The Voice AI agent is good at following instructions. That's what it does — you give it a prompt, it follows it. But it can only follow what you told it. If your prompt doesn't say "don't assume what the caller needs," the agent will happily assume. If your prompt doesn't say "read phone digits one at a time," it'll say "fifty-four thirty-three" instead of "five four three three."

The agent isn't broken. The prompt is incomplete. And the only way to find the gaps is to listen to real calls and compare what happened to what should have happened.

That's tedious work for a human. But it's the perfect job for a second AI — one that reads the transcript, reads the prompt, and says "hey, line 4 says to do X, but on this call you did Y instead." AI auditing AI. One generates the conversation, the other quality-checks it.

The Problem: Nobody's Listening

The Voice AI handles the calls. But who's checking the calls? The owner's busy swinging a hammer. I'm not going to sit and listen to every recording. So the AI agent is out there on its own, and if it develops bad habits, nobody catches it until a customer complains — or worse, just doesn't call back.

I found out the hard way. A woman named Sarah called and said "I'm looking for someone to help me do assembly." The AI immediately responded with "We can definitely help with furniture assembly!" She meant a shed. The AI just guessed. And it guessed wrong.

That's the kind of thing that makes a caller feel like they're talking to a robot that isn't really listening. Which, to be fair, is exactly what happened.

The Fix: Audit Every Call With AI

Here's what I built using Claude Code. The whole thing runs from the terminal.

Step 1: Pull the transcript. GoHighLevel stores every Voice AI call transcript. I pull it through their API — the caller's words, the agent's words, turn by turn.

Step 2: Pull the current prompt. The Voice AI agent runs on a system prompt — instructions for how to greet callers, what questions to ask, what order to ask them, what never to say. I pull that through the API too.

Step 3: Send both to an LLM. I hand the transcript and the prompt to GPT-4o and say "audit this call against these instructions. Be harsh. Catch everything."

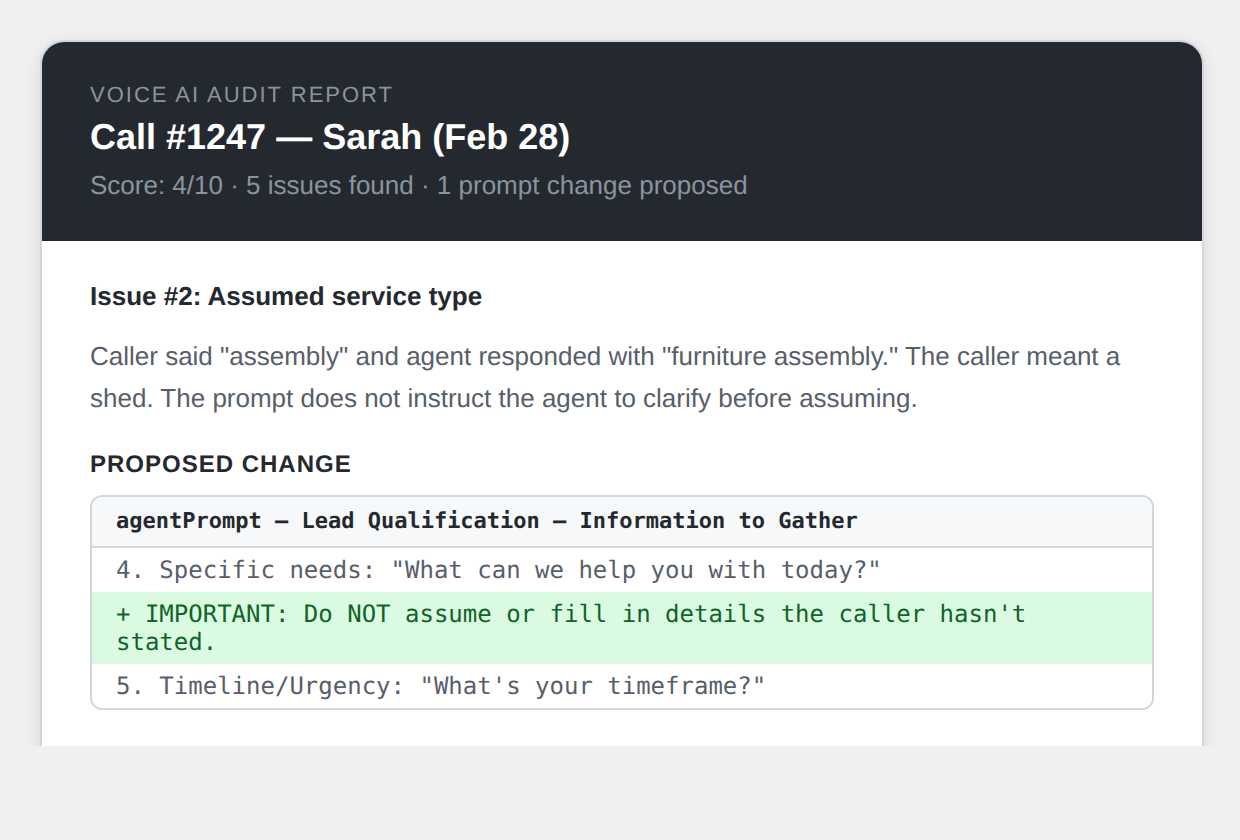

Step 4: Get a scored report. The LLM comes back with a line-by-line breakdown — what the agent did right, what it got wrong, exact quotes from the transcript, severity ratings, and proposed prompt fixes.

Step 5: Email the results. The audit lands in my inbox as an HTML email with GitHub-style diffs showing exactly what should change in the prompt. Green lines for additions, red lines for deletions. One change at a time.
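Steps 3 and 4 can be sketched roughly like this. It is a minimal sketch under stated assumptions, not the actual implementation behind this system: the function names, the JSON report shape, and the field names (`score`, `issues`, `severity`, `quote`, `fix`) are all made up for illustration. The network call to the model is deliberately left out so the example stays self-contained.

```python
import json
from dataclasses import dataclass


@dataclass
class Issue:
    severity: str  # assumed scale, e.g. "high", "medium", "low"
    quote: str     # exact words lifted from the transcript
    fix: str       # proposed change to the agent prompt


@dataclass
class AuditReport:
    score: int
    issues: list[Issue]


def build_audit_messages(agent_prompt: str, transcript: str) -> list[dict]:
    """Assemble chat-style messages asking a model to audit one call."""
    instructions = (
        "Audit this call against these instructions. Be harsh. Catch everything. "
        'Reply with JSON only, shaped like {"score": 1-10, "issues": '
        '[{"severity": "...", "quote": "...", "fix": "..."}]}.'
    )
    user = f"AGENT PROMPT:\n{agent_prompt}\n\nCALL TRANSCRIPT:\n{transcript}"
    return [
        {"role": "system", "content": instructions},
        {"role": "user", "content": user},
    ]


def parse_report(raw_json: str) -> AuditReport:
    """Turn the model's JSON reply into a typed report (assumed shape)."""
    data = json.loads(raw_json)
    issues = [Issue(**item) for item in data["issues"]]
    return AuditReport(score=int(data["score"]), issues=issues)
```

The messages list is in the common role/content chat format, so it can be handed to whichever model client you use. Asking for JSON up front is a design choice: it makes the severity ratings and proposed fixes easy to turn into an email later, instead of scraping them out of free text.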

What the Auditor Catches

I ran it on Sarah's call. Without any hints from me, the LLM flagged four issues and gave the call an overall score:

- The greeting deviated from the script. The prompt has an exact greeting. The agent changed words: "we think is better" instead of "we hope you'll find better." Small, but if you wrote a script, you want the script.

- Assumed the service type. Sarah said "assembly" and the agent jumped to "furniture assembly." She never said furniture. The prompt didn't tell the agent not to assume.

- Promised things it shouldn't. "We can definitely help with that." The agent has no idea if the owner can do the job, if he's available, or if he even wants the work. Its job is to take a message, not make commitments.

- Split the phone confirmation into two questions. "Could you confirm the best phone number to reach you? I see the number ending in 7823, does that work for you?" That's two questions when it should be one.

- Scored it 4 out of 10. Harsher than I would have been, but probably more honest.

The beauty is that every issue the LLM finds becomes a prompt fix. And every prompt fix makes future calls better. The system gets smarter with every call it audits.

The Diff: How Changes Get Tracked

This is my favorite part. When the audit proposes a change, I see it as a GitHub-style diff — the exact section of the prompt, with the old text and new text side by side.

Here's a real example. After the Sarah audit, I added this instruction:

One line. That one line prevents the agent from ever assuming "furniture assembly" again. I review the diff, approve it, and it gets pushed to the live agent through the API. The change is also saved to a version-controlled file in the repo, so I have a full git history of every prompt tweak and why it was made.
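For anyone who wants to produce this kind of diff themselves, here is a minimal sketch using Python's standard `difflib` module. The prompt wording in the example is invented for illustration and is not the actual instruction from this audit. The function names and the colors are assumptions too.

```python
import difflib
import html


def prompt_diff(old_prompt: str, new_prompt: str) -> list[str]:
    """Return unified-diff lines between two versions of the prompt."""
    return list(difflib.unified_diff(
        old_prompt.splitlines(),
        new_prompt.splitlines(),
        fromfile="prompt (live)",
        tofile="prompt (proposed)",
        lineterm="",
    ))


def diff_to_html(diff_lines: list[str]) -> str:
    """Render diff lines as HTML: green for additions, red for deletions."""
    base = "font-family:monospace;white-space:pre-wrap;"
    rows = []
    for index, line in enumerate(diff_lines):
        if index < 2:
            color = "#ffffff"  # the two file header lines (--- and +++)
        elif line.startswith("+"):
            color = "#e6ffed"  # addition
        elif line.startswith("-"):
            color = "#ffeef0"  # deletion
        else:
            color = "#ffffff"  # hunk marker or unchanged context
        rows.append(
            f'<div style="{base}background:{color}">{html.escape(line)}</div>'
        )
    return "<div>" + "".join(rows) + "</div>"


# Hypothetical prompt text, for illustration only.
OLD = "Ask what the caller needs help with.\nConfirm the callback number."
NEW = (
    "Ask what the caller needs help with.\n"
    "Never guess the service type; ask what needs to be done.\n"
    "Confirm the callback number."
)

if __name__ == "__main__":
    print("\n".join(prompt_diff(OLD, NEW)))
```

Inline styles are used because many email clients drop `<style>` blocks, and each line is escaped so prompt text containing `<` or `&` does not break the email.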

10 Changes From Two Calls

After auditing just two calls — Sarah and Mike — I pushed 10 prompt improvements:

- Don't assume the service type

- Try once for a last name (don't nag if they won't give it)

- Phone confirmation in one sentence, not two

- Don't promise "we can definitely help"

- Sound-alike city names (so "Mason" gets clarified to "their city")

- City question tightened to one sentence

- Use the caller's name max three times (seven times in one call was too many)

- Read phone digits individually ("five four three three" not "fifty-four thirty-three")

- Say "your project" in the closing instead of re-describing the work

- Always ask "how did you hear about us?"

Each one came from a real call, a real problem, a real moment where the agent did something a human wouldn't. And each one is tracked in git with a clear reason.

The Cycle: How the Prompt Evolves

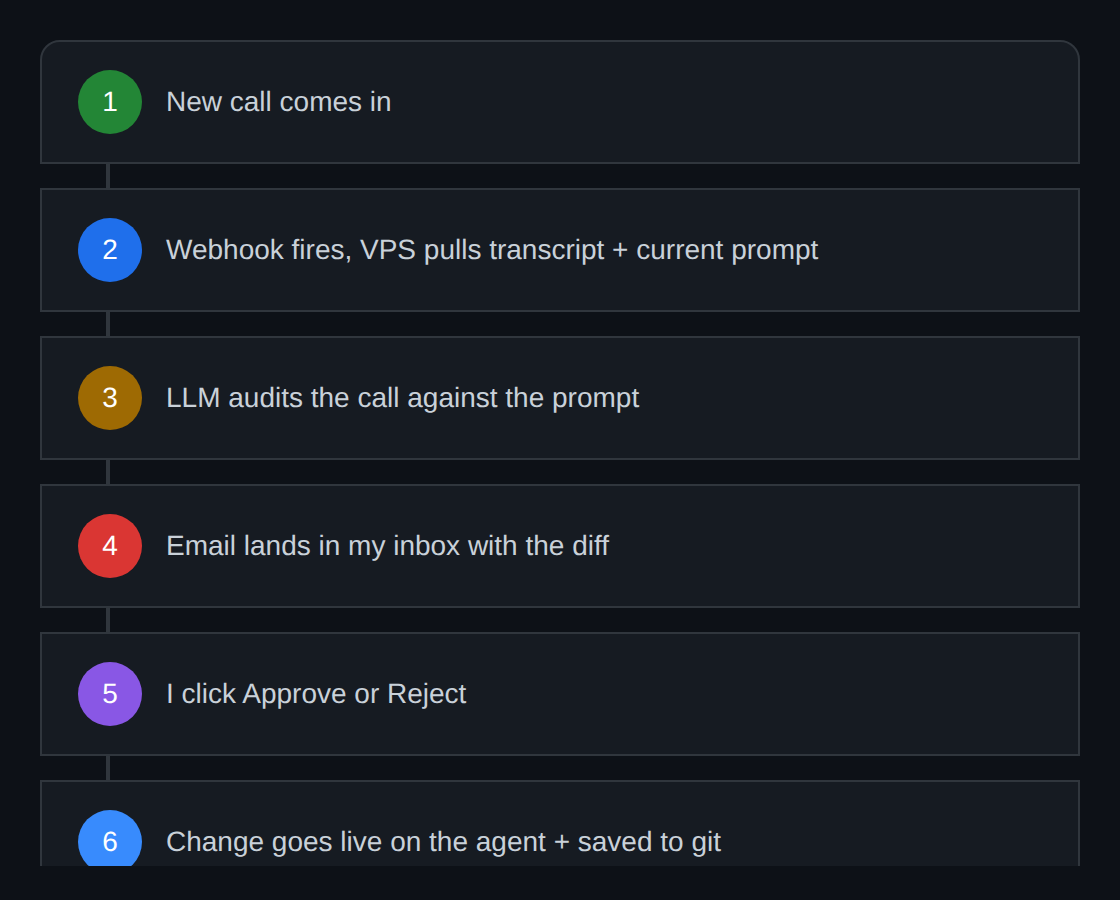

The diagram above is the mental model: a loop that runs on every call.

Claude sits in the middle with MCP connections to the CRM. Every step in the cycle — pulling the transcript, reading the prompt, running the audit, proposing changes, pushing updates — goes through that central brain. The CRM is both the data source and the destination. And the prompt is the living document that gets smarter with every revolution of the cycle.

The Automated Version

Right now I'm running this manually from Claude Code. But here's where it's headed:

Every call. Audited automatically. No human listening to recordings. No issues going unnoticed for weeks.

The email is the key. Here is roughly what it looks like:

I hit Approve. That one click triggers two things: the prompt gets updated on the live Voice AI agent through the API, and the change gets committed to the version-controlled prompt file in the repo with the audit reasoning in the commit message. One click, and the agent is smarter. If the change does not make sense, I hit Reject and it gets logged but nothing changes.
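The Approve and Reject behavior described above could be wired up along these lines. This is a sketch under assumptions: `apply_decision`, `commit_prompt`, and the commit message format are hypothetical names, and the function that pushes the prompt to the live agent is passed in as a parameter because the real API call is not shown in this article.

```python
import subprocess
from pathlib import Path
from typing import Callable


def commit_prompt(prompt_file: Path, new_prompt: str, reasoning: str) -> None:
    """Write the prompt file and commit it with the audit reasoning."""
    prompt_file.write_text(new_prompt, encoding="utf-8")
    repo = prompt_file.parent
    subprocess.run(["git", "add", prompt_file.name], cwd=repo, check=True)
    subprocess.run(
        ["git", "commit", "-m", f"Prompt update: {reasoning}"],
        cwd=repo,
        check=True,
    )


def apply_decision(
    decision: str,
    new_prompt: str,
    reasoning: str,
    push_to_agent: Callable[[str], None],
    commit: Callable[[str, str], None],
    log: Callable[[str], None] = print,
) -> bool:
    """Handle one click. Returns True only if the prompt was changed."""
    if decision == "approve":
        push_to_agent(new_prompt)      # update the live Voice AI agent
        commit(new_prompt, reasoning)  # record the change and the reason
        return True
    # Anything other than an explicit approval is logged and changes nothing.
    log(f"Rejected, no change made: {reasoning}")
    return False
```

In real use, `commit` would be something like `functools.partial(commit_prompt, Path("prompts/voice_agent.txt"))`, where the file path is a placeholder. Treating every value other than `"approve"` as a rejection is the safe default: a malformed click can never change the live agent.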

The human stays in the loop, but only for five seconds. Read the diff, click a button. The AI did the listening, the analysis, the writing, and the formatting. I just decide yes or no.

The pieces all exist. GoHighLevel has workflow triggers for call events. The VPS has a webhook receiver. The Gmail integration can send HTML emails. The GoHighLevel API can read and update the Voice AI prompt. It is just wiring at this point.
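As a rough illustration of that wiring, the sketch below goes from a call event to an HTML audit email. Everything specific here is an assumption: the `call_id` field in the event payload, the function names, and the four injected callables standing in for the transcript fetch, prompt fetch, audit, and send steps. Only the `email.message.EmailMessage` usage is standard-library behavior.

```python
from email.message import EmailMessage
from typing import Callable


def build_audit_email(
    sender: str, recipient: str, call_id: str, score: int, diff_html: str
) -> EmailMessage:
    """Build a multipart email with a plain-text fallback and an HTML body."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = f"Call audit {call_id}: {score}/10"
    msg.set_content("This audit is best viewed in an HTML-capable mail client.")
    msg.add_alternative(diff_html, subtype="html")
    return msg


def handle_call_event(
    event: dict,
    fetch_transcript: Callable[[str], str],
    fetch_prompt: Callable[[], str],
    run_audit: Callable[[str, str], tuple[int, str]],
    send: Callable[[EmailMessage], None],
    sender: str,
    recipient: str,
) -> EmailMessage:
    """Run one pass of the loop for a finished call and email the result."""
    call_id = event["call_id"]  # assumed payload field name
    transcript = fetch_transcript(call_id)
    prompt = fetch_prompt()
    score, diff_html = run_audit(prompt, transcript)
    msg = build_audit_email(sender, recipient, call_id, score, diff_html)
    send(msg)
    return msg
```

Passing each step in as a function keeps the handler independent of any one vendor's API, and it means the whole flow can be exercised with fake functions before it is pointed at live calls.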

Why This Matters Beyond Handyman Calls

This pattern works for any business running a Voice AI agent. The core loop is:

- You have a prompt that tells the AI how to behave

- You have transcripts that show how it actually behaved

- An LLM compares the two and finds the gaps

- You fix the gaps, and the prompt gets better

The prompt is a living document. It evolves with every call. And because everything is tracked in git, you can see the full history — what changed, when, and why. That's the real value. Not just catching mistakes, but building institutional knowledge into the prompt itself.

Every caller who talks to the AI is unknowingly making it better for the next caller. Sarah's shed assembly mix-up means the next person who says "assembly" gets asked what they need assembled. Mike's call where the agent used his name seven times means the next caller gets a more natural conversation. The system learns.

That's what I'm building. Not a dashboard. Not a report nobody reads. An AI that watches the AI and tells me exactly what to fix.

We are an independent affiliate of HighLevel and may earn a commission if you sign up through links on this page. We are not employees or representatives of HighLevel.

Some links in this article are affiliate links. If you purchase through them, we may earn a commission at no extra cost to you. This helps support our content.

This article blends original content, AI-assisted drafting, and human oversight. How I write.
